The Implementation Problem in Parkinson's AI: Lessons from Cardiology

A patient visits his primary care physician because of a tremor that has been bothering him for months. He notices it when holding a cup of coffee. His spouse has started to notice it when he uses his phone. On exam, there is subtle asymmetry, though the findings are not dramatic. The visit is scheduled for fifteen minutes. Blood pressure needs adjustment. Chronic back pain is discussed. Laboratory results require review. Within that compressed clinical space, the tremor falls into a gray zone. It could represent essential tremor or reflect early Parkinson's disease. The level of certainty may remain limited at this stage.

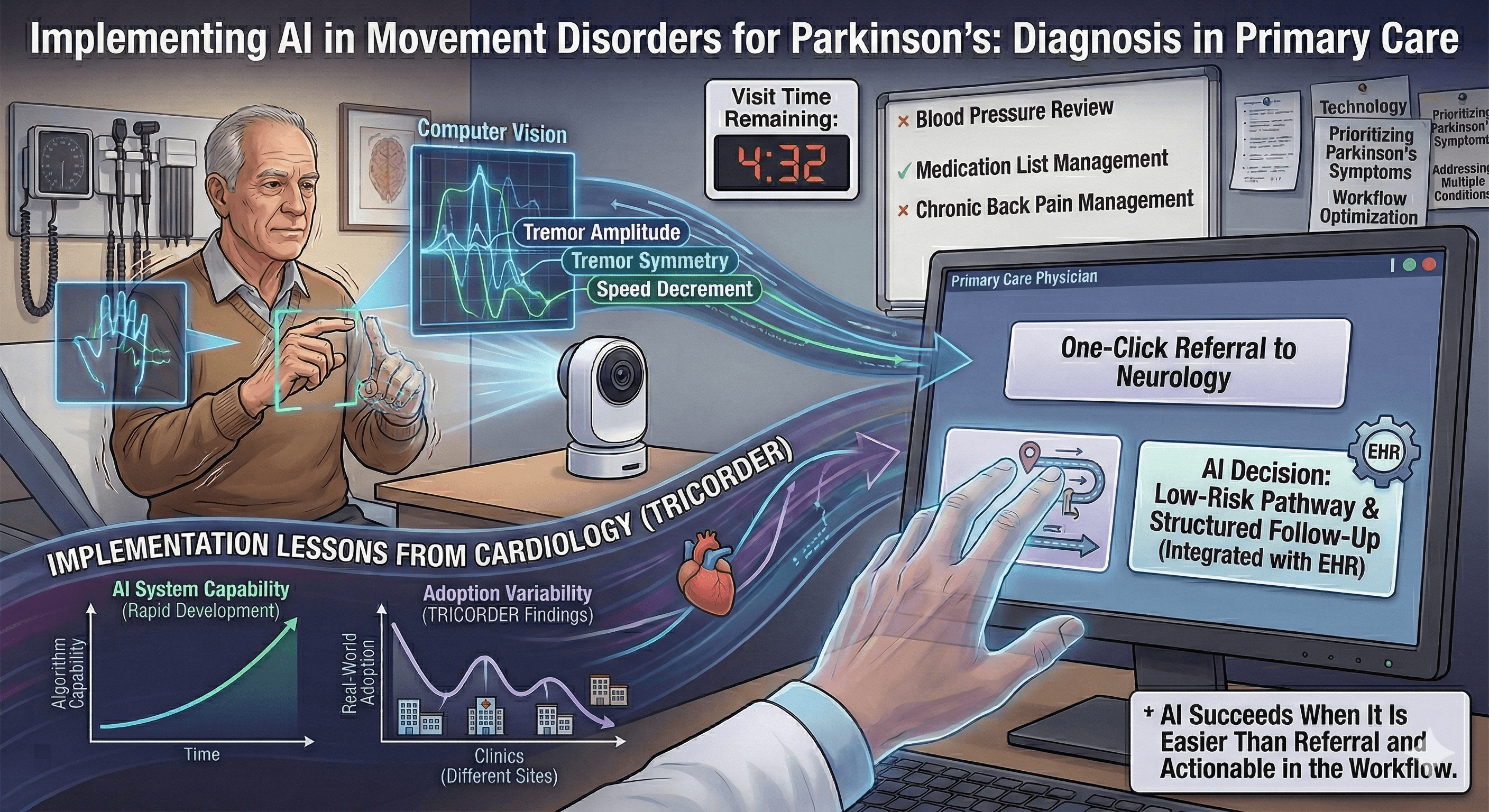

Now imagine a small camera becomes part of that visit. The patient performs a brief structured movement task. The system quantifies tremor frequency and amplitude, evaluates asymmetry, and measures speed and decrement during repetitive movements. A probability estimate appears, supporting differentiation among tremor syndromes and highlighting features consistent with parkinsonism. Computer vision approaches that attempt this are advancing steadily in research settings, and translational pathways toward clinical tools are taking shape. As these systems move closer to routine care, the question shifts. The issue becomes less about discrimination in a curated dataset and more about how the tool behaves once it enters everyday clinical practice.
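
To make "quantifies tremor frequency and amplitude" concrete, here is a minimal sketch of one way such a measurement could work. It is not a description of any particular product. It assumes the camera pipeline has already reduced the video to a one-dimensional displacement trace (for example, fingertip position per frame). The function name `dominant_tremor`, the 30 frames-per-second rate, and the 3 to 12 Hz search band are illustrative assumptions.

```python
import cmath
import math


def dominant_tremor(signal, fs, band=(3.0, 12.0)):
    """Return (frequency_hz, amplitude) of the strongest component in `band`.

    Uses a plain discrete Fourier transform so the sketch needs no third-party
    libraries. `band` is an assumed search range, not a clinical standard.
    """
    n = len(signal)
    mean = sum(signal) / n
    centered = [x - mean for x in signal]  # remove constant offset
    best_freq, best_amp = 0.0, 0.0
    for k in range(1, n // 2):
        freq = k * fs / n
        if not (band[0] <= freq <= band[1]):
            continue  # ignore slow voluntary movement and high-frequency noise
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * i / n)
            for i, x in enumerate(centered)
        )
        amp = 2.0 * abs(coeff) / n  # single-sided amplitude
        if amp > best_amp:
            best_freq, best_amp = freq, amp
    return best_freq, best_amp


# Synthetic trace (hypothetical): 10 s at 30 frames per second, with a
# 5 Hz oscillation of amplitude 2 riding on a slower 0.5 Hz movement.
fs = 30.0
trace = [
    2.0 * math.sin(2 * math.pi * 5.0 * i / fs)
    + 5.0 * math.sin(2 * math.pi * 0.5 * i / fs)
    for i in range(300)
]
freq_hz, amplitude = dominant_tremor(trace, fs)
print(f"dominant frequency: {freq_hz:.1f} Hz, amplitude: {amplitude:.2f}")
```

Restricting the search to a frequency band is what keeps the larger, slower movement from being mistaken for the tremor. A real system would also have to handle camera motion, tracking noise, and units of physical distance, none of which this sketch attempts.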

A recent large pragmatic trial in cardiology, the TRICORDER study, offers a useful perspective. In that study, primary care practices received an AI-enabled cardiovascular examination device intended to support earlier identification of heart failure and related conditions. The evaluation spanned twelve months and relied on routine clinical coding to measure outcomes. Because the device was embedded directly into routine workflows across many sites, the trial captured how adoption unfolded over time. Some practices used the device frequently and made it part of standard assessment. Others used it occasionally. In several sites, use declined as competing demands shaped the clinic day.

When investigators focused on patients examined with the device, detection rates were higher. When they examined outcomes across the entire population of the practices assigned to receive the technology, the overall difference was smaller. The results reflected both the algorithm's capability and the consistency with which clinicians incorporated it into practice.
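
The gap between those two views can be illustrated with simple arithmetic. The sketch below uses made-up numbers, not TRICORDER results. It also makes a strong simplifying assumption: that patients who are examined with the device are otherwise similar to those who are not. The function name `population_detection_rate` is hypothetical.

```python
def population_detection_rate(baseline_rate, rate_when_examined, uptake):
    """Blend detection rates across examined and unexamined patients.

    uptake: fraction of eligible patients actually examined with the device.
    Assumes examined and unexamined patients are comparable, which real
    practice may not satisfy.
    """
    if not 0.0 <= uptake <= 1.0:
        raise ValueError("uptake must be between 0 and 1")
    return uptake * rate_when_examined + (1.0 - uptake) * baseline_rate


# Hypothetical illustration: the device doubles detection among examined
# patients (1% to 2%), but practices differ in how often they use it.
baseline = 0.010
examined = 0.020
for uptake in (0.1, 0.5, 0.9):
    overall = population_detection_rate(baseline, examined, uptake)
    relative_gain = overall / baseline - 1.0
    print(f"uptake {uptake:.0%}: population rate {overall:.4f} "
          f"({relative_gain:.0%} above baseline)")
```

Under these assumptions, a tool that doubles detection in the patients it touches produces only a 10% population-level gain when one patient in ten is examined. The algorithm is unchanged across the three rows; only adoption differs.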

That structure matters for Parkinson's disease. A tremor differentiation or bradykinesia assessment tool may align closely with expert diagnosis in carefully selected cohorts. Its effect in community practice will depend on how consistently it is used, how seamlessly it integrates into documentation, and whether its outputs influence referral and follow-up decisions. Clinician engagement becomes part of the outcome. Concentration of use within a subset of clinics shapes what appears at the population level.

Longer pragmatic evaluations are particularly informative in this context because they allow adoption patterns to settle. They show how technology behaves after initial curiosity fades and daily workflow reasserts itself. They reveal whether use remains broadly distributed or clusters among clinicians who are especially motivated. For tools intended to extend diagnostic support beyond tertiary centers, that system-level view carries weight.

Parkinson's disease introduces an additional challenge because its diagnosis evolves. Clinical certainty often strengthens over time. Early diagnostic labels may shift as atypical features emerge or as longitudinal observation clarifies the phenotype. When computer vision systems are trained or validated against baseline clinical diagnoses, they inherit this temporal instability. Some apparent disagreement between model output and recorded diagnosis reflects the natural progression of understanding rather than simple measurement error. Emerging pathology-linked biomarkers may provide stronger biological anchoring for subsets of Parkinson's disease and refine classification. Their integration also introduces threshold decisions and spectrum considerations that require evaluation across diverse populations.

Generalizability further shapes implementation. Many computer vision datasets originate in tertiary movement disorder centers, where prevalence is higher and expertise is concentrated. Community neurology and primary care operate in environments with different pretest probabilities, documentation practices, and referral pathways. Probability thresholds calibrated in specialty settings may require adjustment in lower-prevalence contexts. A clear definition of intended use, whether for triage, diagnostic support, or subspecialty refinement, helps align evaluation strategies with deployment goals.
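
The effect of prevalence on a fixed threshold follows from Bayes' rule, and a short sketch makes it visible. All numbers here are hypothetical, and the function names are illustrative. The calculation assumes sensitivity and specificity carry over unchanged between settings, which spectrum differences between specialty and community populations may violate.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """Probability of disease given a positive result, by Bayes' rule."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


def adjust_for_prevalence(prob, source_prevalence, target_prevalence):
    """Re-scale a model probability from one prevalence to another.

    Standard prior-shift correction on the odds scale. It assumes the
    model's likelihood ratios are stable across settings.
    """
    odds = prob / (1.0 - prob)
    source_prior_odds = source_prevalence / (1.0 - source_prevalence)
    target_prior_odds = target_prevalence / (1.0 - target_prevalence)
    new_odds = odds * target_prior_odds / source_prior_odds
    return new_odds / (1.0 + new_odds)


# Hypothetical tool with 90% sensitivity and 90% specificity.
specialty_ppv = positive_predictive_value(0.90, 0.90, prevalence=0.40)
primary_care_ppv = positive_predictive_value(0.90, 0.90, prevalence=0.02)
print(f"PPV at 40% prevalence: {specialty_ppv:.3f}")
print(f"PPV at 2% prevalence:  {primary_care_ppv:.3f}")

# A score of 0.80 produced in the specialty setting, re-expressed for
# a 2% prevalence setting.
print(f"adjusted probability: {adjust_for_prevalence(0.80, 0.40, 0.02):.3f}")
```

With these illustrative inputs, the same positive result that is correct about 86% of the time in a specialty clinic is correct about 16% of the time at 2% prevalence. That is why a threshold tuned for diagnostic support in a tertiary center may suit triage poorly in primary care.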

Workflow ultimately determines durability. In primary care, the baseline behavior already exists. When uncertainty arises, the clinician can click a referral to neurology or to a movement disorders specialist. That action requires little additional time and transfers diagnostic responsibility to a subspecialist. Any tremor-differentiation or bradykinesia-assessment device competes directly with that click. For consistent use, it must either be simpler than placing a referral or enable an immediate decision the primary care physician feels comfortable making. That decision might involve reassurance, initiation of a low-risk intervention, or structured follow-up, with confidence that the action will help the patient and is unlikely to cause harm. If the device introduces additional steps, unfamiliar probability outputs, or uncertainty about next steps, referral remains the path of least resistance. Adoption then concentrates among clinicians who are particularly interested or motivated, and population-level impact narrows accordingly. A system that integrates seamlessly into the electronic health record, generates structured documentation automatically, and links directly to an actionable pathway is more likely to be used over the long term.

The TRICORDER study illustrates how technical capability and clinical systems intersect over time. Parkinson's AI development is advancing, and this is the moment to align innovation with implementation discipline. Tools for tremor differentiation and bradykinesia assessment will succeed at scale when they are easier than referral, actionable within the visit, calibrated to the evolving diagnostic reality, and evaluated across the settings where most patients first seek care. The design challenge is no longer limited to the model. It now extends to the system that surrounds it.